Most recent TBTK release at the time of writing: v1.1.1

Updated to work with: v2.0.0

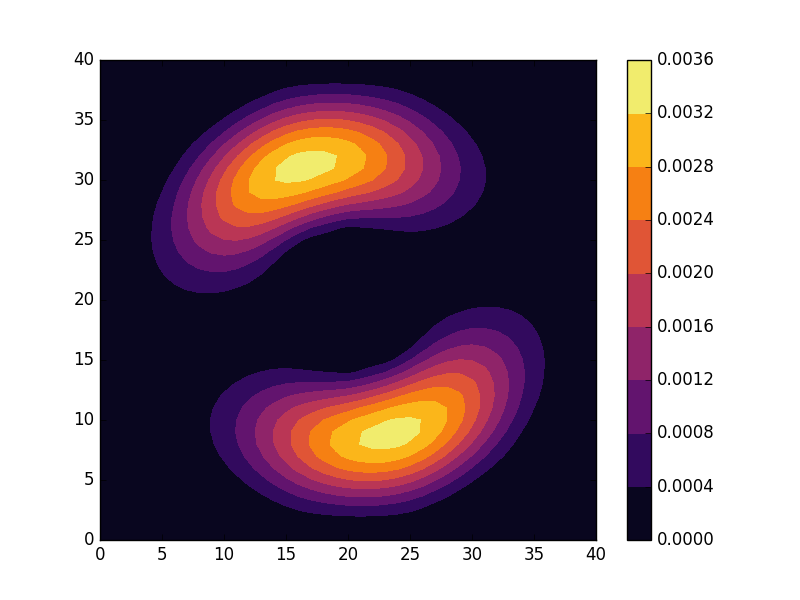

In condensed matter physics, the electronic band structure is one of the most commonly used tools for understanding the electronic properties of a material. Here we take a look at how to set up a tight-binding model of graphene and calculate the band structure along paths between certain high symmetry points in the Brillouin zone. We also calculate the density of states (DOS). A basic understanding of band theory is assumed. If further background is required, a good starting point is to look at the nearly free electron model, Bloch waves, Bloch’s theorem, and the Brillouin zone.

Physical description

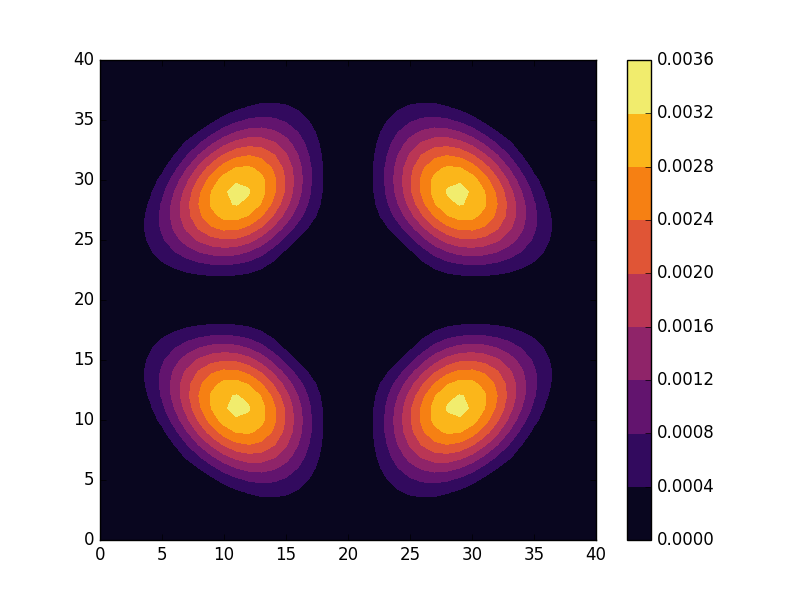

Lattice

Graphene has a hexagonal lattice that can be described using the lattice vectors ![]() ,

, ![]() , and

, and ![]() . We set the lattice constant to

. We set the lattice constant to ![]() , which only is meant to reflect the correct order of magnitude. Strictly speaking, only two two-dimensional vectors are required to describe the lattice. However, we have here made the vectors three-dimensional and included a third lattice vector

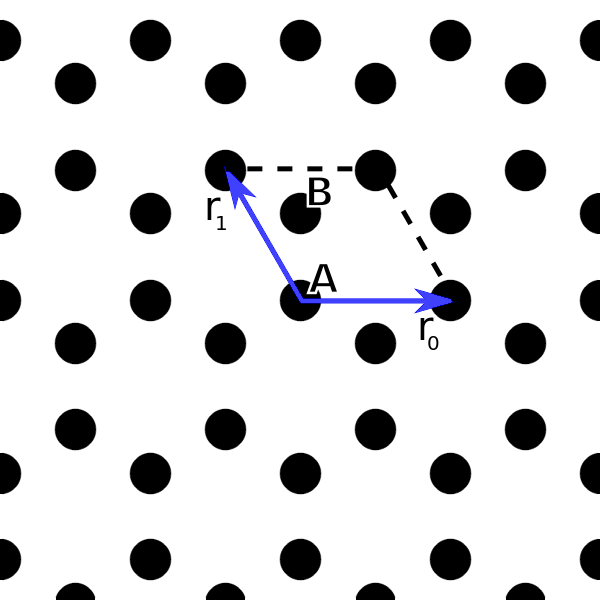

, which only is meant to reflect the correct order of magnitude. Strictly speaking, only two two-dimensional vectors are required to describe the lattice. However, we have here made the vectors three-dimensional and included a third lattice vector ![]() that is perpendicular to the lattice to simplify the calculation of the reciprocal lattice vectors. The resulting lattice is shown in the image below.

that is perpendicular to the lattice to simplify the calculation of the reciprocal lattice vectors. The resulting lattice is shown in the image below.

The image also indicates the unit cell and the A and B sublattice sites within it. We further define

![Rendered by QuickLaTeX.com \[ \begin{aligned} \mathbf{r}_{AB}^{(0)} =& \frac{\mathbf{r}_0 + 2\mathbf{r}_1}{3},\\ \mathbf{r}_{AB}^{(1)} =& -\mathbf{r}_1 + \mathbf{r}_{AB}^{(0)},\\ \mathbf{r}_{AB}^{(2)} =& -\mathbf{r}_0 - \mathbf{r}_1 + \mathbf{r}_{AB}^{(0)}, \end{aligned} \]](http://second-tech.com/wordpress/wp-content/ql-cache/quicklatex.com-78cf9c0b57f89e789f9743d503fa0706_l3.png)

which can be verified to be the vectors from an A site to its three nearest neighbor B sites.
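As a quick sanity check of these definitions, the three vectors can be evaluated numerically. The sketch below is plain C++ without TBTK; the `Vec2` alias and the function names are illustrative assumptions and not part of the original code. For a lattice constant $a$, all three vectors should come out with the same length $a/\sqrt{3}$, which is the nearest neighbor distance.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Illustrative 2D vector type; not part of TBTK.
using Vec2 = std::array<double, 2>;

// Returns the three vectors from an A site to its nearest neighbor B sites
// for lattice constant a, following the definitions of r_AB^(0), r_AB^(1),
// and r_AB^(2) given above.
std::array<Vec2, 3> nearestNeighborVectors(double a){
    assert(a > 0);
    const Vec2 r0 = {{a, 0}};
    const Vec2 r1 = {{-a/2, a*std::sqrt(3.0)/2}};
    const Vec2 rAB0 = {{(r0[0] + 2*r1[0])/3, (r0[1] + 2*r1[1])/3}};
    const Vec2 rAB1 = {{-r1[0] + rAB0[0], -r1[1] + rAB0[1]}};
    const Vec2 rAB2 = {{
        -r0[0] - r1[0] + rAB0[0],
        -r0[1] - r1[1] + rAB0[1]
    }};
    return {{rAB0, rAB1, rAB2}};
}

// Euclidean length of a 2D vector.
double norm(const Vec2 &v){
    return std::sqrt(v[0]*v[0] + v[1]*v[1]);
}
```

Calling `nearestNeighborVectors(2.5)` and passing each result to `norm()` gives three equal lengths of about 1.44 Å, as expected for nearest neighbors on the honeycomb lattice.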

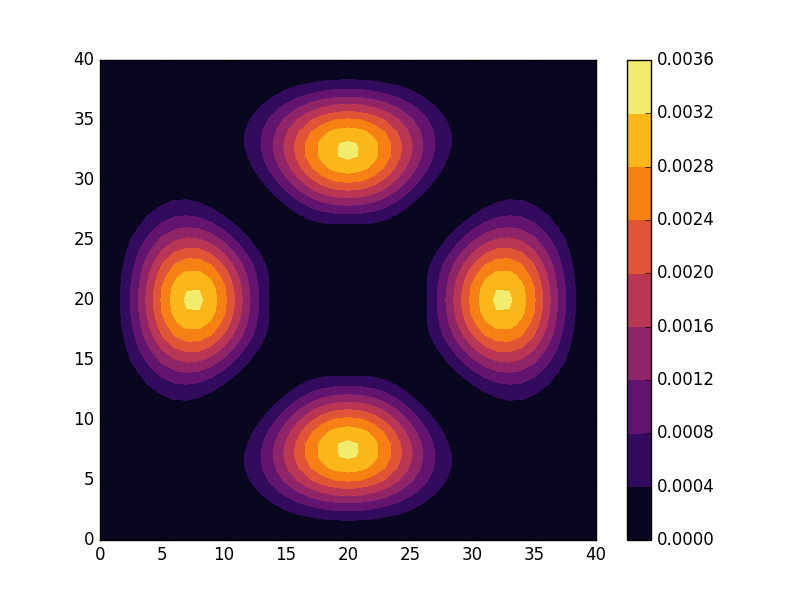

Brillouin zone

The reciprocal lattice vectors can now be calculated using

![Rendered by QuickLaTeX.com \[ \begin{aligned} \mathbf{k}_0 =& \frac{2\pi \mathbf{r}_1\times\mathbf{r}_2}{\mathbf{r}_0\cdot\mathbf{r}_1\times\mathbf{r}_2},\\ \mathbf{k}_1 =& \frac{2\pi \mathbf{r}_2\times\mathbf{r}_0}{\mathbf{r}_1\cdot\mathbf{r}_2\times\mathbf{r}_0},\\ \mathbf{k}_2 =& \frac{2\pi\mathbf{r}_0\times\mathbf{r}_1}{\mathbf{r}_2\cdot\mathbf{r}_0\times\mathbf{r}_1}. \end{aligned} \]](http://second-tech.com/wordpress/wp-content/ql-cache/quicklatex.com-e9ec406b51d6e8a5e27c358752729d69_l3.png)

Here ![]() just like

just like ![]() is related to the z-direction and will be dropped from here on. The two remaining reciprocal lattice vectors

is related to the z-direction and will be dropped from here on. The two remaining reciprocal lattice vectors ![]() and

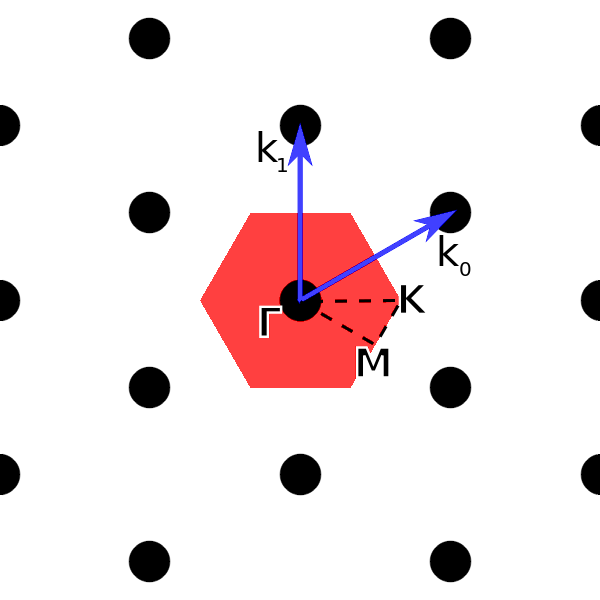

and ![]() defines the reciprocal lattice of interest to us. Below the reciprocal lattice is shown.

defines the reciprocal lattice of interest to us. Below the reciprocal lattice is shown.

In the the image above we have also indicated the first Brillouin zone in red, and outlined the path ![]() along which the band structure will be calculated. The high symmetry points can be verified to be given by

along which the band structure will be calculated. The high symmetry points can be verified to be given by

![Rendered by QuickLaTeX.com \[ \begin{aligned} \mathbf{\Gamma} =& (0, 0, 0),\\ \mathbf{M} =& (\frac{\pi}{a}, \frac{-\pi}{\sqrt{3}a}, 0),\\ \mathbf{K} =& (\frac{4\pi}{3a}, 0, 0). \end{aligned} \]](http://second-tech.com/wordpress/wp-content/ql-cache/quicklatex.com-b5445661f8865929eb0d02a1b38f213e_l3.png)
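The verification can be sketched numerically. The snippet below is plain C++ without TBTK, and the `Vec3` alias and function names are illustrative assumptions. It evaluates the three reciprocal lattice vectors from the formulas above, so that one can check $\mathbf{r}_i\cdot\mathbf{k}_j = 2\pi\delta_{ij}$. With the lattice vectors used here, the high symmetry points can then be written as $\mathbf{M} = (\mathbf{k}_0 - \mathbf{k}_1)/2$ and $\mathbf{K} = (2\mathbf{k}_0 - \mathbf{k}_1)/3$.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Illustrative 3D vector type; not part of TBTK.
using Vec3 = std::array<double, 3>;

Vec3 cross(const Vec3 &u, const Vec3 &v){
    return {{
        u[1]*v[2] - u[2]*v[1],
        u[2]*v[0] - u[0]*v[2],
        u[0]*v[1] - u[1]*v[0]
    }};
}

double dot(const Vec3 &u, const Vec3 &v){
    return u[0]*v[0] + u[1]*v[1] + u[2]*v[2];
}

// Returns k_0, k_1, k_2 for the lattice vectors r_0, r_1, r_2 using
// k_n = 2*pi*(r_{n+1} x r_{n+2})/(r_n . (r_{n+1} x r_{n+2})).
std::array<Vec3, 3> reciprocalVectors(const std::array<Vec3, 3> &r){
    const double pi = std::acos(-1.0);
    std::array<Vec3, 3> k;
    for(unsigned int n = 0; n < 3; n++){
        const Vec3 c = cross(r[(n+1)%3], r[(n+2)%3]);
        const double volume = dot(r[n], c);
        assert(volume != 0);
        for(unsigned int m = 0; m < 3; m++)
            k[n][m] = 2*pi*c[m]/volume;
    }
    return k;
}

// The graphene lattice vectors used in this post.
std::array<Vec3, 3> grapheneLattice(double a){
    return {{
        Vec3{{a, 0, 0}},
        Vec3{{-a/2, a*std::sqrt(3.0)/2, 0}},
        Vec3{{0, 0, a}}
    }};
}
```

Evaluating `reciprocalVectors(grapheneLattice(a))` gives $\mathbf{k}_0 = \frac{2\pi}{a}(1, 1/\sqrt{3}, 0)$ and $\mathbf{k}_1 = \frac{2\pi}{a}(0, 2/\sqrt{3}, 0)$, from which the coordinates of M and K listed above follow.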

Hamiltonian

We will use a simple tight-binding model of graphene of the form

\[ H = -t\sum_{\mathbf{i}\boldsymbol{\delta}}c_{\mathbf{i},A}^{\dagger}c_{\mathbf{i}+\boldsymbol{\delta},B} + H.c. \]

Here $t$ is a hopping amplitude connecting nearest neighbor sites, which we take to be $t = 3$ eV (again, only correct up to order of magnitude). The index $\mathbf{i}$ runs over the unit cells, while $\boldsymbol{\delta}$ takes on the three values that make the B site labeled by $\mathbf{i}+\boldsymbol{\delta}$ a nearest neighbor of the A site in unit cell $\mathbf{i}$.

Because of translation invariance, it is possible to transform the expression above to a simple expression in reciprocal space. To do so we write

![Rendered by QuickLaTeX.com \[ \begin{aligned} c_{\mathbf{i},A} =& \frac{1}{\sqrt{N}}\sum_{\mathbf{k}}c_{\mathbf{k},A}e^{ik\cdot \mathbf{R}_{\mathbf{i}}},\\ c_{\mathbf{i}+\boldsymbol\delta},B} =& \frac{1}{\sqrt{N}}\sum_{\mathbf{k}}c_{\mathbf{k},B}e^{i\mathbf{k}\cdot \left(\mathbf{R}_{\mathbf{i}} + \mathbf{r}_{\boldsymbol\delta}\right)}. \end{aligned} \]](http://second-tech.com/wordpress/wp-content/ql-cache/quicklatex.com-09bdc2b0dc0d16dbb74d1521382c2074_l3.png)

Here $\mathbf{R}_{\mathbf{i}}$ is the position of the A atom in unit cell $\mathbf{i}$, while $\mathbf{r}_{\boldsymbol{\delta}} \in \{\mathbf{r}_{AB}^{(0)}, \mathbf{r}_{AB}^{(1)}, \mathbf{r}_{AB}^{(2)}\}$.

Inserting this into the expression above we get

![Rendered by QuickLaTeX.com \[ \begin{aligned} H =& \frac{-t}{N}\sum_{i\mathbf{k}\mathbf{k}'\boldsymbol{\delta}}c_{\mathbf{k},A}^{\dagger}c_{\mathbf{k}',B}e^{-i\mathbf{k}\cdot \mathbf{R}_{\mathbf{i}}}e^{i\mathbf{k}'\cdot\left(\mathbf{R}_{\mathbf{i}} + \mathbf{r}_{\boldsymbol{\delta}}\right)} + H.c\\ =& -t\sum_{\mathbf{k}\boldsymbol{\delta}}c_{\mathbf{k},A}^{\dagger}c_{\mathbf{k},B}e^{i\mathbf{k}\cdot \mathbf{r}_{\boldsymbol{\delta}}} + H.c\\ =& -t\sum_{\mathbf{k}}c_{\mathbf{k},A}^{\dagger}c_{\mathbf{k},B}\left(e^{i\mathbf{k}\cdot\mathbf{r}_{AB}^{(0)}} + e^{i\mathbf{k}\cdot\mathbf{r}_{AB}^{(1)}} + e^{i\mathbf{k}\cdot\mathbf{r}_{AB}^{(2)}}\right) + H.c. \end{aligned} \]](http://second-tech.com/wordpress/wp-content/ql-cache/quicklatex.com-0e966222b1b37543d4062c651033c281_l3.png)

In the second line we have summed over $\mathbf{i}$ and used the delta function property $\frac{1}{N}\sum_{\mathbf{i}}e^{i(\mathbf{k}' - \mathbf{k})\cdot\mathbf{R}_{\mathbf{i}}} = \delta_{\mathbf{k}\mathbf{k}'}$ to eliminate $\mathbf{k}'$, while in the last line we have made the sum over $\boldsymbol{\delta}$ explicit.
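For each $\mathbf{k}$, the last line describes a two-level problem with off-diagonal element $f(\mathbf{k}) = -t\sum_{n}e^{i\mathbf{k}\cdot\mathbf{r}_{AB}^{(n)}}$, whose eigenvalues are $\pm|f(\mathbf{k})|$. The following sketch evaluates the upper band directly from this expression. It is plain C++ without TBTK, and the function name and argument order are assumptions made for illustration. At the high symmetry points it should give $3t$ at $\Gamma$, $t$ at M, and $0$ at K.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <complex>

// Upper band energy E_+(k) = |f(k)| with
// f(k) = -t*sum_n exp(i k . r_AB^(n)). The lower band is -E_+(k).
// Illustrative helper; not part of TBTK.
double upperBand(double kx, double ky, double t, double a){
    assert(a > 0);
    const std::complex<double> i(0, 1);

    // Nearest neighbor distance and the three vectors r_AB^(n).
    const double s = a/std::sqrt(3.0);
    const std::array<std::array<double, 2>, 3> rAB = {{
        {{0, s}},
        {{a/2, -s/2}},
        {{-a/2, -s/2}}
    }};

    std::complex<double> f(0, 0);
    for(const auto &r : rAB)
        f += -t*std::exp(i*(kx*r[0] + ky*r[1]));

    return std::abs(f);
}
```

With $t = 3$ eV, this means that the bands span the interval from $-9$ eV to $9$ eV, which fits inside the energy window of $\pm 10$ eV used in the implementation.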

Implementation

Parameters

We are now ready to implement the calculation using TBTK. To do so, we begin by specifying the parameters to be used in the calculation

    //Set the natural units for this calculation.
    UnitHandler::setScales({
        "1 rad", "1 C", "1 pcs", "1 eV",
        "1 Ao", "1 K", "1 s"
    });

    //Define parameters.
    double t = 3; //eV
    double a = 2.5; //Ångström
    unsigned int BRILLOUIN_ZONE_RESOLUTION = 1000;
    vector<unsigned int> numMeshPoints = {
        BRILLOUIN_ZONE_RESOLUTION,
        BRILLOUIN_ZONE_RESOLUTION
    };
    const int K_POINTS_PER_PATH = 100;
    const double ENERGY_LOWER_BOUND = -10;
    const double ENERGY_UPPER_BOUND = 10;
    const int ENERGY_RESOLUTION = 1000;

The call to UnitHandler::setScales() specifies the units in which dimensionful parameters are to be understood. For our purposes here, this means that energies and distances are measured in electron volts (eV) and Ångström (Ao), respectively.

We next specify the values of $t$ and $a$ as given in the physical description above. The value ‘BRILLOUIN_ZONE_RESOLUTION’ determines the number of subintervals each of the reciprocal lattice vectors is divided into when generating a mesh for the Brillouin zone. ‘numMeshPoints’ combines this information into a vector. Similarly, ‘K_POINTS_PER_PATH’ determines the number of k-points to use along each of the paths $\Gamma \rightarrow M$, $M \rightarrow K$, and $K \rightarrow \Gamma$ when calculating the band structure. Finally, the energy window that is used to calculate the DOS is specified in the three last lines.

Real and reciprocal lattice

The lattice vectors $\mathbf{r}_0$, $\mathbf{r}_1$, and $\mathbf{r}_2$ are now defined as follows.

    Vector3d r[3];
    r[0] = Vector3d({a, 0, 0});
    r[1] = Vector3d({-a/2, a*sqrt(3)/2, 0});
    r[2] = Vector3d({0, 0, a});

The nearest neighbor vectors $\mathbf{r}_{AB}^{(0)}$, $\mathbf{r}_{AB}^{(1)}$, and $\mathbf{r}_{AB}^{(2)}$ are similarly defined using

    Vector3d r_AB[3];
    r_AB[0] = (r[0] + 2*r[1])/3.;
    r_AB[1] = -r[1] + r_AB[0];
    r_AB[2] = -r[0] - r[1] + r_AB[0];

Next, the reciprocal lattice vectors are calculated using the expressions at the beginning of the Brillouin zone section.

    Vector3d k[3];
    for(unsigned int n = 0; n < 3; n++){
        k[n] = 2*M_PI*r[(n+1)%3]*r[(n+2)%3]/(
            Vector3d::dotProduct(
                r[n],
                r[(n+1)%3]*r[(n+2)%3]
            )
        );
    }

Note that here ‘*’ indicates the cross product, while the scalar product between u and v is calculated using Vector3d::dotProduct(u, v).

Having defined the lattice vectors, we can now construct a Brillouin zone.

    BrillouinZone brillouinZone(
        {
            {k[0].x, k[0].y},
            {k[1].x, k[1].y}
        },
        SpacePartition::MeshType::Nodal
    );

Here the first argument is a list of lattice vectors, while the second argument specifies the type of mesh that is going to be associated with this Brillouin zone. SpacePartition::MeshType::Nodal means that when the Brillouin zone is divided into small parallelograms, the mesh points will lie on the corners of these parallelograms. If SpacePartition::MeshType::Interior is used instead, the mesh points will lie in the middle of the parallelograms.

Having constructed a Brillouin zone, we finally generate a mesh by passing the number of segments into which each reciprocal lattice vector is to be divided to the following function.

    vector<vector<double>> mesh
        = brillouinZone.getMinorMesh(
            numMeshPoints
        );

Set up the model

The model is set up using the following code.

    Model model;
    for(unsigned int n = 0; n < mesh.size(); n++){
        //Get Index representation of the current
        //k-point.
        Index kIndex = brillouinZone.getMinorCellIndex(
            mesh[n],
            numMeshPoints
        );

        //Calculate the matrix element. Here i is the
        //imaginary unit, which is assumed to have been
        //defined earlier as complex<double> i(0, 1).
        Vector3d k({mesh[n][0], mesh[n][1], 0});
        complex<double> h_01 = -t*(
            exp(
                -i*Vector3d::dotProduct(
                    k,
                    r_AB[0]
                )
            )
            + exp(
                -i*Vector3d::dotProduct(
                    k,
                    r_AB[1]
                )
            )
            + exp(
                -i*Vector3d::dotProduct(
                    k,
                    r_AB[2]
                )
            )
        );

        //Add the matrix element to the model.
        model << HoppingAmplitude(
            h_01,
            {kIndex[0], kIndex[1], 0},
            {kIndex[0], kIndex[1], 1}
        ) + HC;
    }
    model.construct();

Here the loop runs over each lattice point in the mesh. To understand the call to brillouinZone.getMinorCellIndex() at the top of the loop body, we first note that the mesh contains k-points of the form $(k_x, k_y)$ with real numbers $k_x$ and $k_y$. However, to specify a model, we need discrete indices with integer-valued subindices to label the points. Think of this as each k value being of the form $(\Delta k_x n_x, \Delta k_y n_y)$, where $(\Delta k_x, \Delta k_y)$ is a real-valued vector while $(n_x, n_y)$ is an integer-valued vector that indexes the different k-points. The BrillouinZone solves this problem for us by automatically providing a mapping from continuous indices to discrete indices. Given a real index in the first Brillouin zone and information about the number of subdivisions of the lattice vectors, brillouinZone.getMinorCellIndex() finds the closest discrete point and returns its Index representation. This index can then be used to refer to the k-point whenever an Index object is required.

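Inside the loop, the mesh point is next converted to a Vector3d object, which is then used to calculate the matrix element h_01 that we derived in the section about the Hamiltonian. Note that the exponents in the code carry the opposite sign compared to the last line of that derivation. This amounts to complex conjugating the matrix element (equivalently, letting k go to -k) and does not change the eigenvalues, which are plus and minus the absolute value of h_01. The matrix element, together with its Hermitian conjugate, is then fed to the model through the HoppingAmplitude expression. Here we construct the full index for the model by combining the integer kIndex[0] and kIndex[1] values, together with the sublattice index, into an index structure of the form {kx, ky, sublattice}. A zero in the sublattice index refers to an A site, while one refers to a B site. Finally, the model is constructed through the call to model.construct().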
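To make the idea of mapping a continuous k-point to a discrete index concrete, the following is a minimal sketch of one way such a mapping could be done. It is not the TBTK implementation of getMinorCellIndex(), and the integers TBTK returns may be organized differently. The function name `toMeshIndex` and its arguments are hypothetical. The sketch expresses the k-point in terms of the two reciprocal lattice vectors, rounds to the nearest mesh node, and wraps the result using the periodicity of reciprocal space.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Hypothetical helper, NOT the TBTK implementation: maps a continuous 2D
// k-point to integer mesh coordinates for a mesh with n[0] x n[1] nodes
// spanned by the reciprocal lattice vectors k0 and k1.
std::array<int, 2> toMeshIndex(
    const std::array<double, 2> &k,
    const std::array<double, 2> &k0,
    const std::array<double, 2> &k1,
    const std::array<int, 2> &n
){
    assert(n[0] > 0 && n[1] > 0);

    // Solve k = c0*k0 + c1*k1 for the fractional coordinates c0 and c1
    // using Cramer's rule.
    const double det = k0[0]*k1[1] - k0[1]*k1[0];
    assert(det != 0);
    const double c[2] = {
        (k[0]*k1[1] - k[1]*k1[0])/det,
        (k0[0]*k[1] - k0[1]*k[0])/det
    };

    std::array<int, 2> index;
    for(int d = 0; d < 2; d++){
        // Round to the nearest mesh node, then wrap into [0, n[d]).
        const long m = std::lround(c[d]*n[d]);
        index[d] = static_cast<int>(((m % n[d]) + n[d]) % n[d]);
    }
    return index;
}
```

The wrapping step is what allows points such as M and K, which have a negative coefficient along $\mathbf{k}_1$, to be mapped onto non-negative integer indices.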

Selecting solver

We are now ready to solve the model and will use diagonalization to do so. In this case, we have a problem that is block-diagonal in the k-index. For this reason, we will use the solver Solver::BlockDiagonalizer that can take advantage of this block structure. To set up and run the diagonalization we write

    Solver::BlockDiagonalizer solver;
    solver.setModel(model);
    solver.run();

We then set up the corresponding property extractor and configure the energy window using

    PropertyExtractor::BlockDiagonalizer
        propertyExtractor(solver);
    propertyExtractor.setEnergyWindow(
        ENERGY_LOWER_BOUND,
        ENERGY_UPPER_BOUND,
        ENERGY_RESOLUTION
    );
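Because the model is block-diagonal in the k-index, each k-point contributes an independent 2x2 Hermitian block with zero diagonal and h_01 as the off-diagonal element. What diagonalizing one such block amounts to can be sketched in closed form. The snippet below is plain C++ and not how TBTK solves the problem internally; the `Matrix2` alias and the function name are assumptions made for illustration.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <complex>

// Illustrative 2x2 complex matrix type; not part of TBTK.
using Matrix2 = std::array<std::array<std::complex<double>, 2>, 2>;

// Returns the eigenvalues of a Hermitian 2x2 matrix in ascending order,
// using E = (a + d)/2 -+ sqrt(((a - d)/2)^2 + |h|^2), where a and d are
// the diagonal entries and h is the off-diagonal entry.
std::array<double, 2> eigenvalues(const Matrix2 &H){
    // The closed form expression requires a Hermitian matrix.
    assert(H[0][0].imag() == 0 && H[1][1].imag() == 0);
    assert(H[0][1] == std::conj(H[1][0]));

    const double mean = (H[0][0].real() + H[1][1].real())/2;
    const double halfDifference = (H[0][0].real() - H[1][1].real())/2;
    const double radius = std::sqrt(
        halfDifference*halfDifference + std::norm(H[0][1])
    );
    return {{mean - radius, mean + radius}};
}
```

For the graphene blocks, where both diagonal entries are zero, this reduces to the two eigenvalues being minus and plus the absolute value of h_01.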

Extracting the DOS

Extracting, smoothing, and plotting the DOS is now done as follows.

    //Calculate the density of states (DOS).
    Property::DOS dos
        = propertyExtractor.calculateDOS();

    //Smooth the DOS.
    const double SMOOTHING_SIGMA = 0.03;
    const unsigned int SMOOTHING_WINDOW = 51;
    dos = Smooth::gaussian(
        dos,
        SMOOTHING_SIGMA,
        SMOOTHING_WINDOW
    );

    //Plot the DOS.
    Plotter plotter;
    plotter.plot(dos);
    plotter.save("figures/DOS.png");
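To illustrate what the smoothing step does conceptually, here is a minimal sketch of Gaussian smoothing of sampled data. It is not the TBTK implementation of Smooth::gaussian(); the function name, the explicit sample spacing `dE`, the kernel normalization, and the treatment of the boundaries are all assumptions of this sketch. It assumes that sigma is given in energy units and that the window size is an odd number of sample points.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Illustrative sketch, not the TBTK implementation: smooths data sampled
// with spacing dE by convolving it with a normalized Gaussian of standard
// deviation sigma, truncated to windowSize (odd) sample points.
std::vector<double> gaussianSmooth(
    const std::vector<double> &data,
    double dE,
    double sigma,
    unsigned int windowSize
){
    assert(windowSize%2 == 1 && sigma > 0 && dE > 0);
    const int half = static_cast<int>(windowSize/2);

    // Tabulate the kernel and normalize it so that its weights sum to one.
    std::vector<double> kernel(windowSize);
    double sum = 0;
    for(int n = -half; n <= half; n++){
        const double x = n*dE;
        kernel[n + half] = std::exp(-x*x/(2*sigma*sigma));
        sum += kernel[n + half];
    }
    for(double &w : kernel)
        w /= sum;

    // Convolve, treating points outside the data range as zero.
    const int size = static_cast<int>(data.size());
    std::vector<double> result(data.size(), 0.0);
    for(int c = 0; c < size; c++){
        for(int n = -half; n <= half; n++){
            const int m = c + n;
            if(m >= 0 && m < size)
                result[c] += kernel[n + half]*data[m];
        }
    }
    return result;
}
```

With the parameters used in this post, the energy window of 20 eV divided into 1000 points gives a spacing of about 0.02 eV, so a window of 51 points covers roughly 0.5 eV to either side of each point, which is many times the smoothing width of 0.03 eV.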

Extract the band structure

We are now ready to calculate the band structure. To do so, we begin by defining the high symmetry points

    Vector3d Gamma({0, 0, 0});
    Vector3d M({M_PI/a, -M_PI/(sqrt(3)*a), 0});
    Vector3d K({4*M_PI/(3*a), 0, 0});

These are then packed in pairs to define the three paths $\Gamma \rightarrow M$, $M \rightarrow K$, and $K \rightarrow \Gamma$.

    vector<vector<Vector3d>> paths = {
        {Gamma, M},
        {M, K},
        {K, Gamma}
    };

We now loop over each of the three paths and calculate the band structure at each k-point along these paths.

    Array<double> bandStructure(
        {2, 3*K_POINTS_PER_PATH},
        0
    );
    Range interpolator(0, 1, K_POINTS_PER_PATH);
    for(unsigned int p = 0; p < 3; p++){
        //Select the start and endpoints for the
        //current path.
        Vector3d startPoint = paths[p][0];
        Vector3d endPoint = paths[p][1];

        //Loop over a single path.
        for(
            unsigned int n = 0;
            n < K_POINTS_PER_PATH;
            n++
        ){
            //Interpolate between the paths start
            //and end point.
            Vector3d k = (
                interpolator[n]*endPoint
                + (1 - interpolator[n])*startPoint
            );

            //Get the Index representation of the
            //current k-point.
            Index kIndex = brillouinZone.getMinorCellIndex(
                {k.x, k.y},
                numMeshPoints
            );

            //Extract the eigenvalues for the
            //current k-point.
            bandStructure[
                {0, n+p*K_POINTS_PER_PATH}
            ] = propertyExtractor.getEigenValue(
                kIndex,
                0
            );
            bandStructure[
                {1, n+p*K_POINTS_PER_PATH}
            ] = propertyExtractor.getEigenValue(
                kIndex,
                1
            );
        }
    }

Here we first set up the array ‘bandStructure’ that is to contain the band structure with two eigenvalues per k-point. The variable ‘interpolator’ is then defined, which is an array of values from 0 to 1 that will be used to interpolate between each path’s start and end points. At the top of the outer loop we select the start and end points for the current path, after which the inner loop runs over the k-points along this path.

Inside the inner loop, we calculate an interpolated k-point between the first and last points of the given path. Just as when setting up the model, we then request the Index that corresponds to the given k-point. Having obtained this Index, we extract the lowest eigenvalue for the corresponding block in the first call to propertyExtractor.getEigenValue() and store it in the array ‘bandStructure’. Similarly, the highest eigenvalue is obtained for the same block in the second call.
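The interpolation step can be isolated into a small standalone sketch. The snippet below is plain C++ without TBTK, and the `Point` alias and function name are illustrative. It assumes that both endpoints are included among the interpolation weights, which is an assumption of this sketch rather than a statement about how the Range object above is configured.

```cpp
#include <array>
#include <cassert>
#include <vector>

// Illustrative 2D point type; not part of TBTK.
using Point = std::array<double, 2>;

// Returns numPoints k-points evenly spaced on the straight line from start
// to end, with both endpoints included (an assumption of this sketch).
std::vector<Point> interpolatePath(
    const Point &start,
    const Point &end,
    unsigned int numPoints
){
    assert(numPoints >= 2);
    std::vector<Point> points;
    points.reserve(numPoints);
    for(unsigned int n = 0; n < numPoints; n++){
        // Interpolation weight running from 0 (start) to 1 (end).
        const double s = n/static_cast<double>(numPoints - 1);
        const Point p = {{
            s*end[0] + (1 - s)*start[0],
            s*end[1] + (1 - s)*start[1]
        }};
        points.push_back(p);
    }
    return points;
}
```

When three such paths are concatenated, the point with local index n on path p ends up at position n + p*numPoints, which is the same bookkeeping that is used for the second index of ‘bandStructure’ above.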

Next, we calculate the maximum and minimum value of the band structure along the calculated paths. We do this to allow for drawing vertical lines of the correct height at the M and K points when plotting the data.

    double min = bandStructure[{0, 0}];
    double max = bandStructure[{1, 0}];
    for(
        unsigned int n = 0;
        n < 3*K_POINTS_PER_PATH;
        n++
    ){
        if(min > bandStructure[{0, n}])
            min = bandStructure[{0, n}];
        if(max < bandStructure[{1, n}])
            max = bandStructure[{1, n}];
    }

Finally, we plot the band structure.

    plotter.clear();
    plotter.setLabelX("k");
    plotter.setLabelY("Energy");
    plotter.plot(
        bandStructure.getSlice({0, _a_}),
        {{"color", "black"}}
    );
    plotter.plot(
        bandStructure.getSlice({1, _a_}),
        {{"color", "black"}}
    );
    plotter.plot(
        {K_POINTS_PER_PATH, K_POINTS_PER_PATH},
        {min, max},
        {{"color", "black"}}
    );
    plotter.plot(
        {2*K_POINTS_PER_PATH, 2*K_POINTS_PER_PATH},
        {min, max},
        {{"color", "black"}}
    );
    plotter.save("figures/BandStructure.png");

Here the ‘_a_’ flag in the calls to bandStructure.getSlice({0, _a_}) and bandStructure.getSlice({1, _a_}) is a wildcard. In the first call, it indicates that we want to get a new array that contains all the elements of the original array that have a zero in the first index and an arbitrary index in the second. The second call does the same for the elements with a one in the first index. ‘a’ stands for ‘all’.

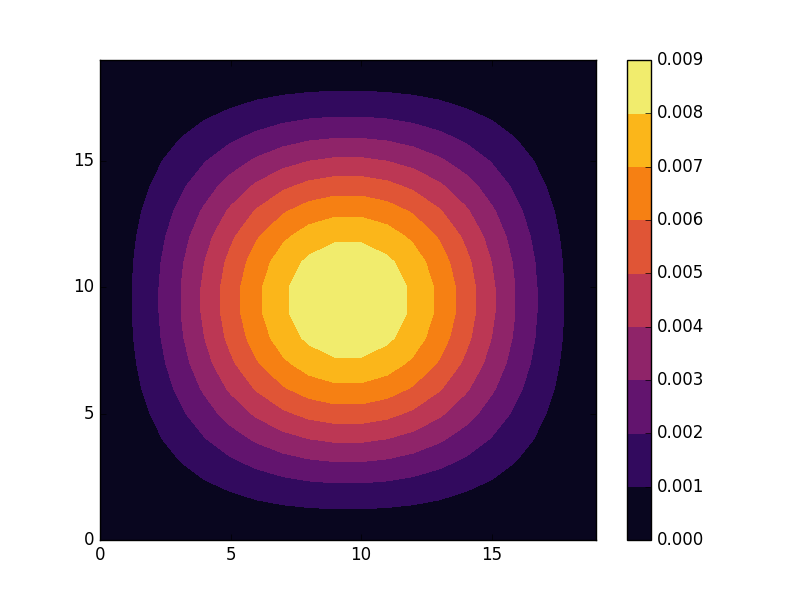

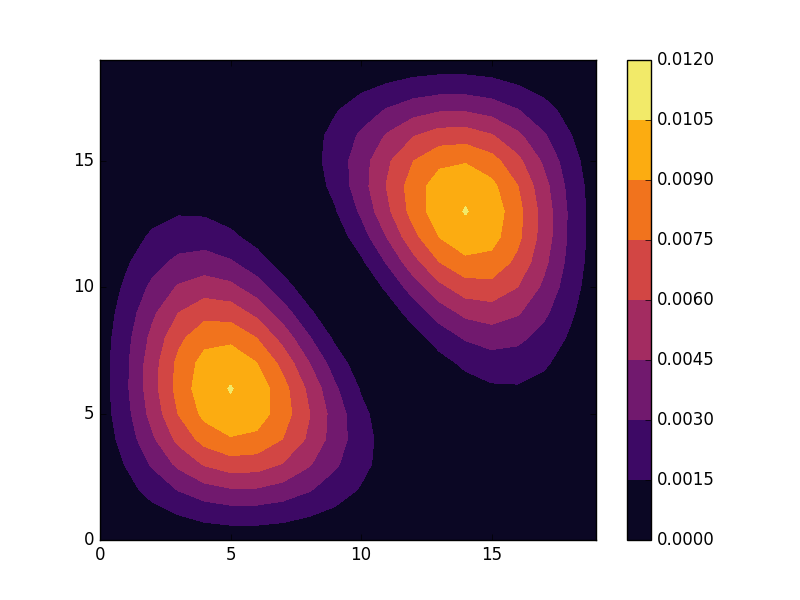

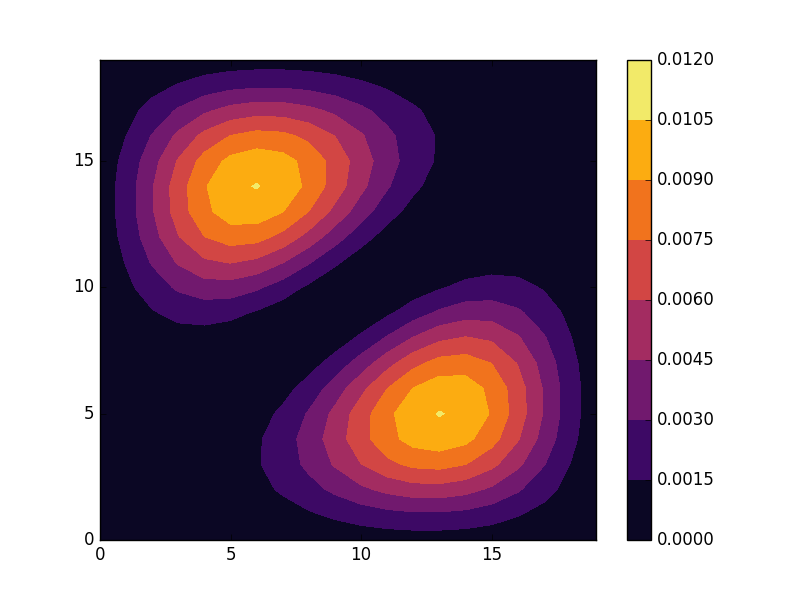

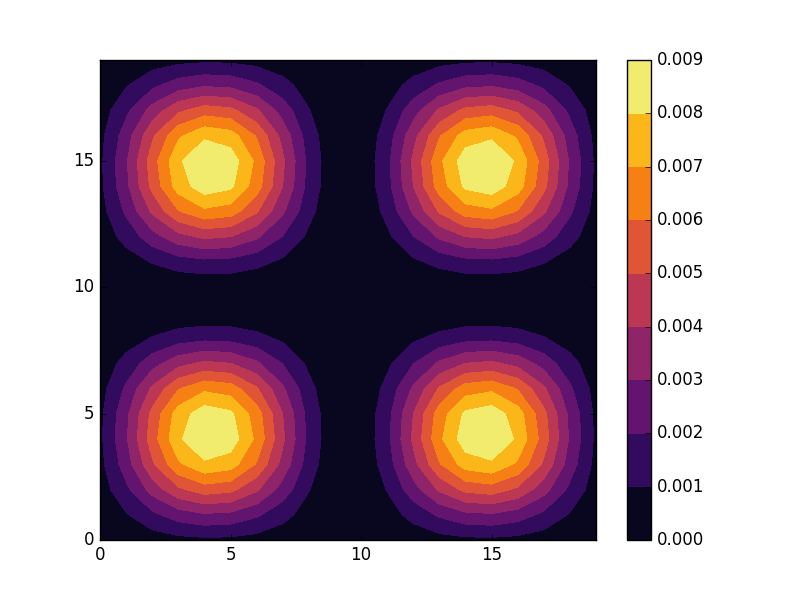

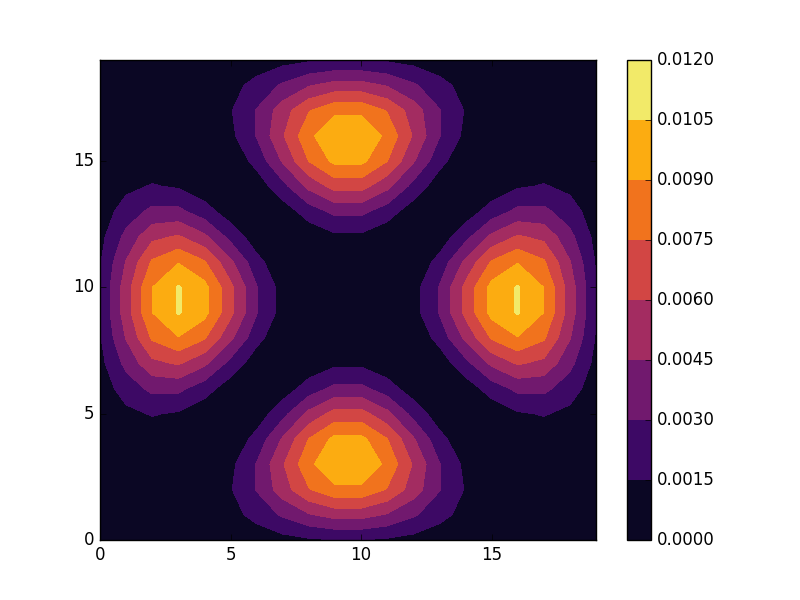

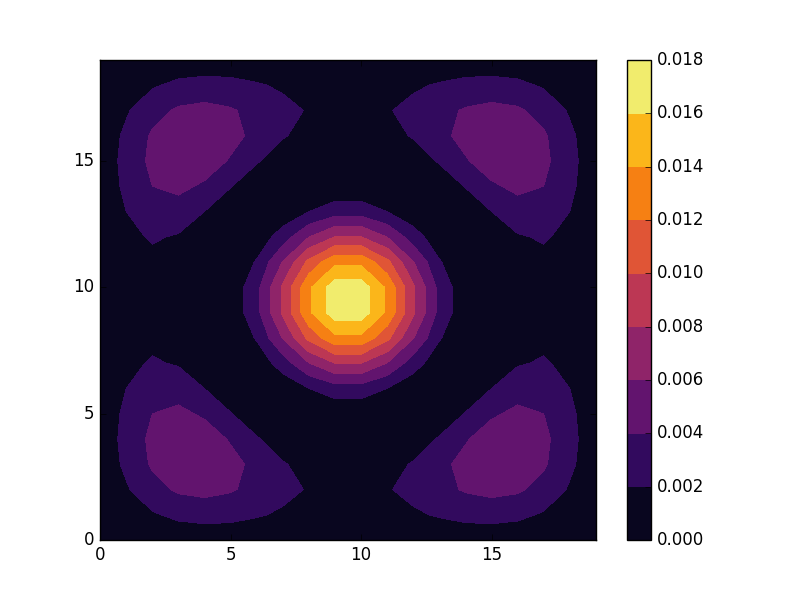

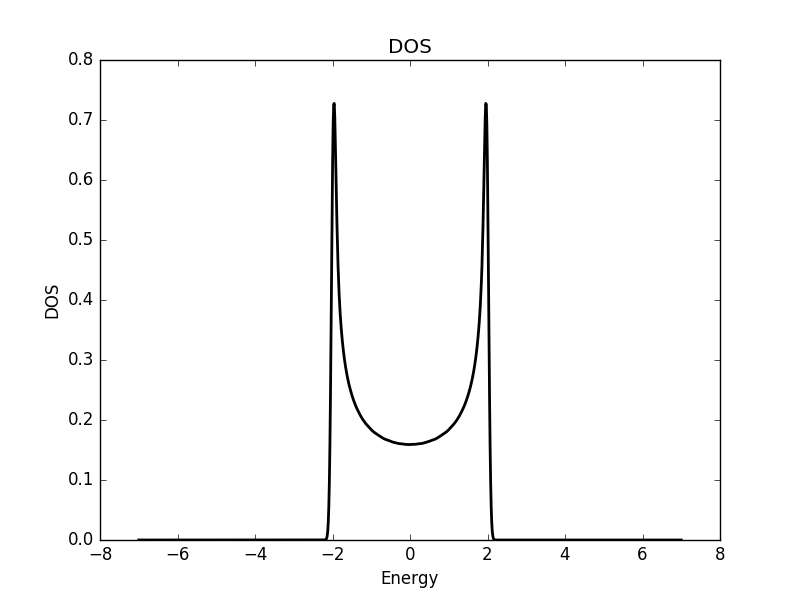

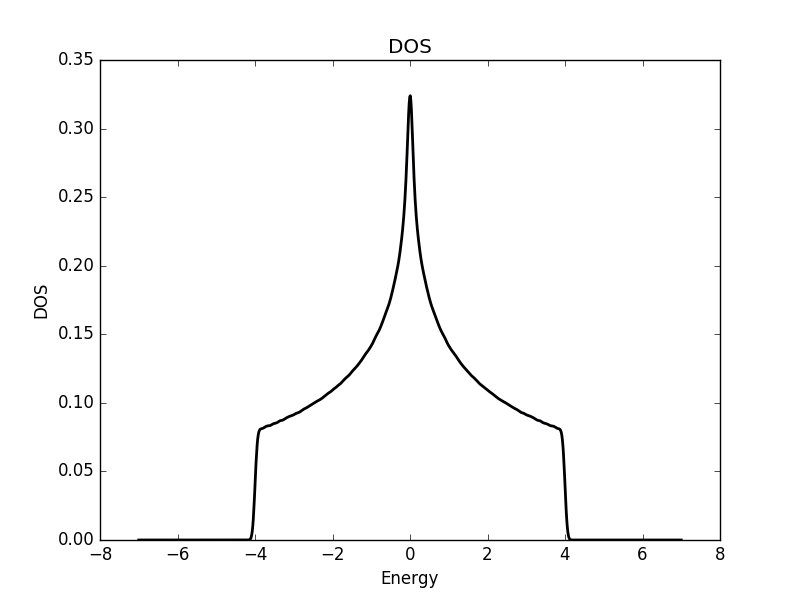

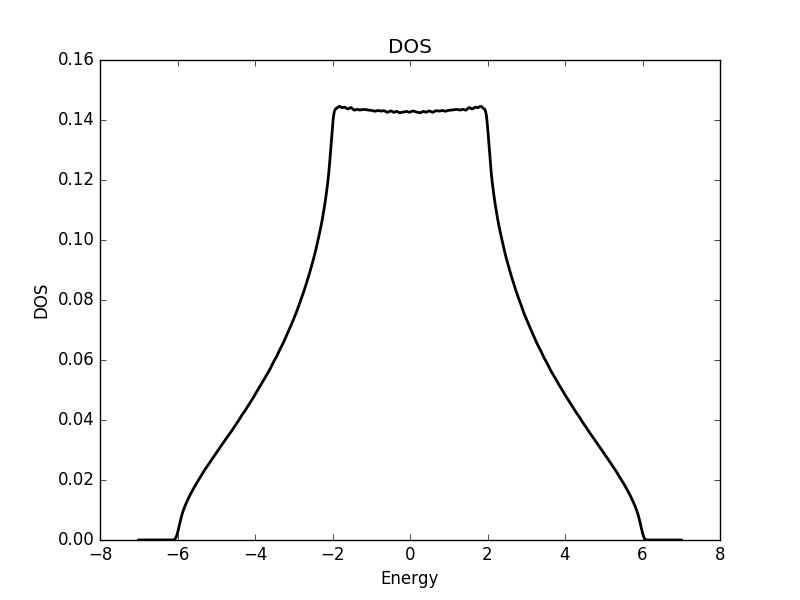

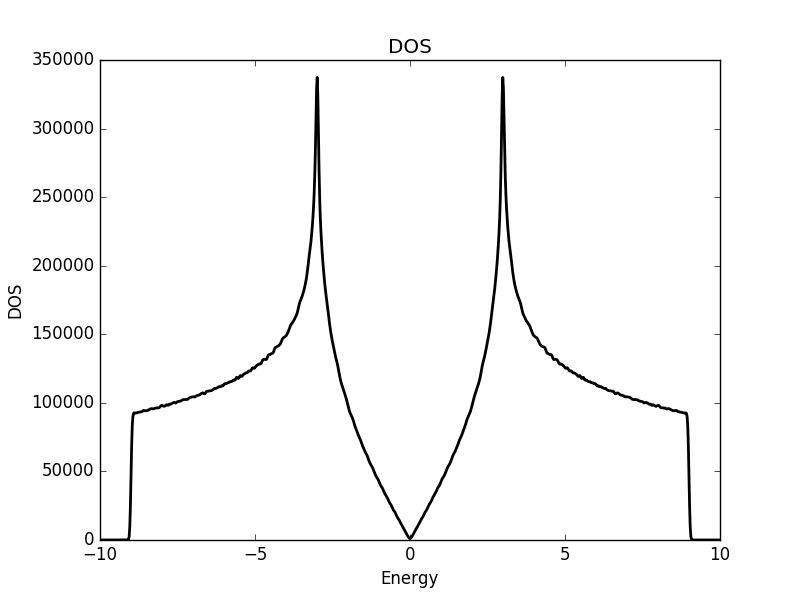

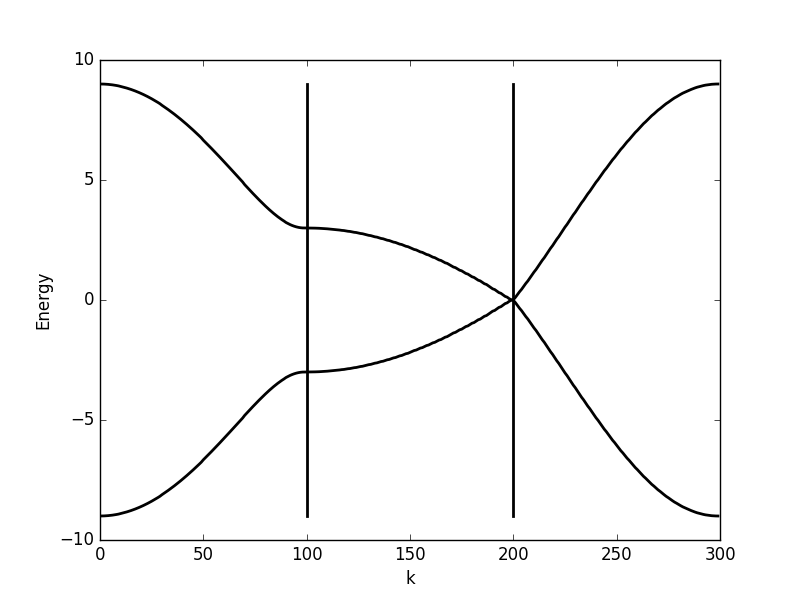

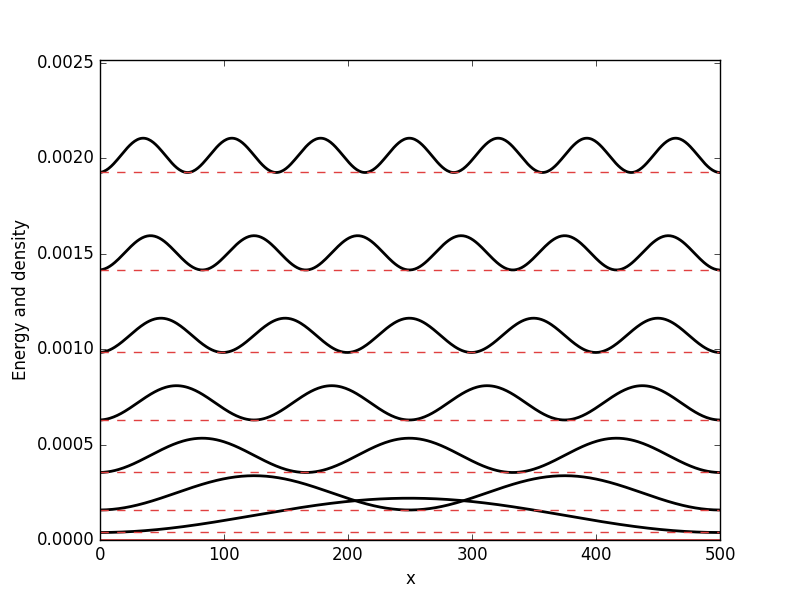

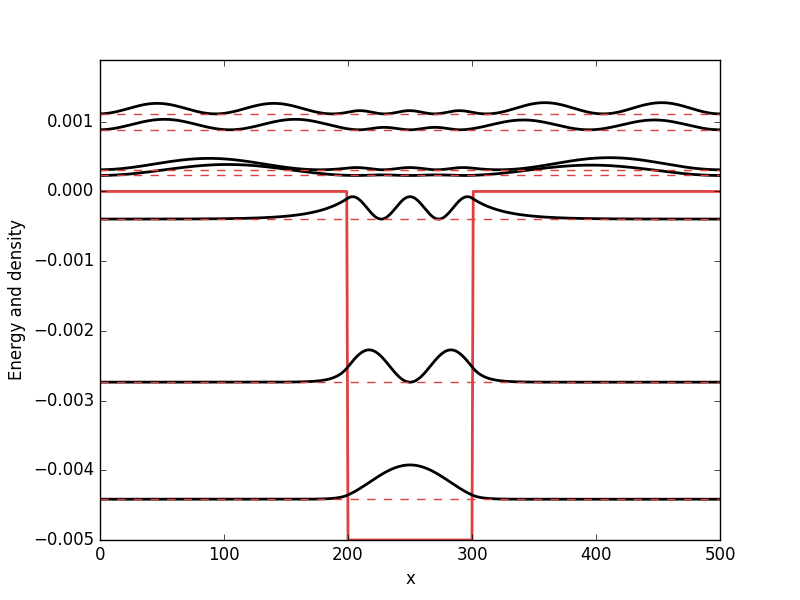

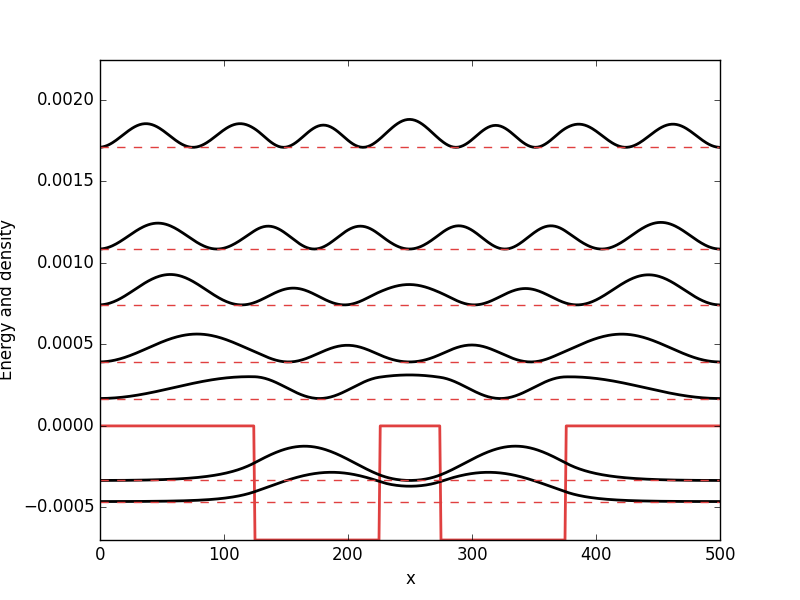

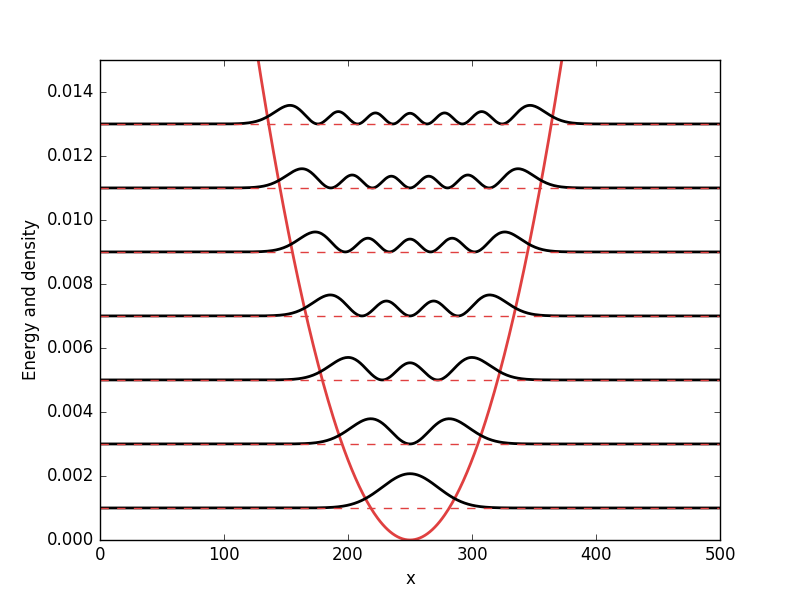

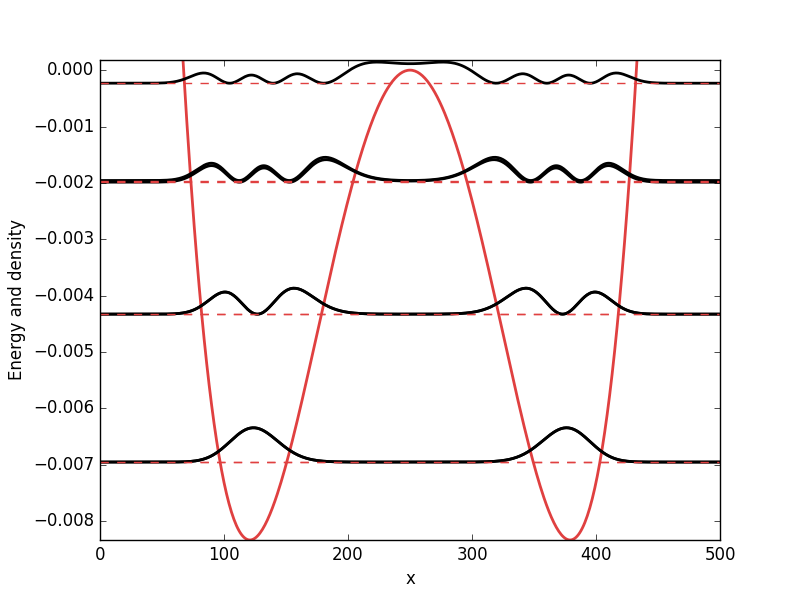

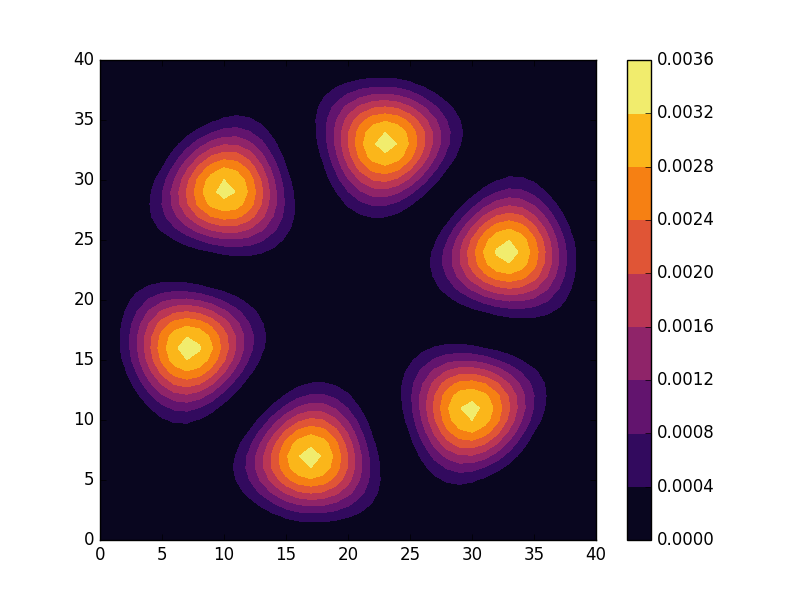

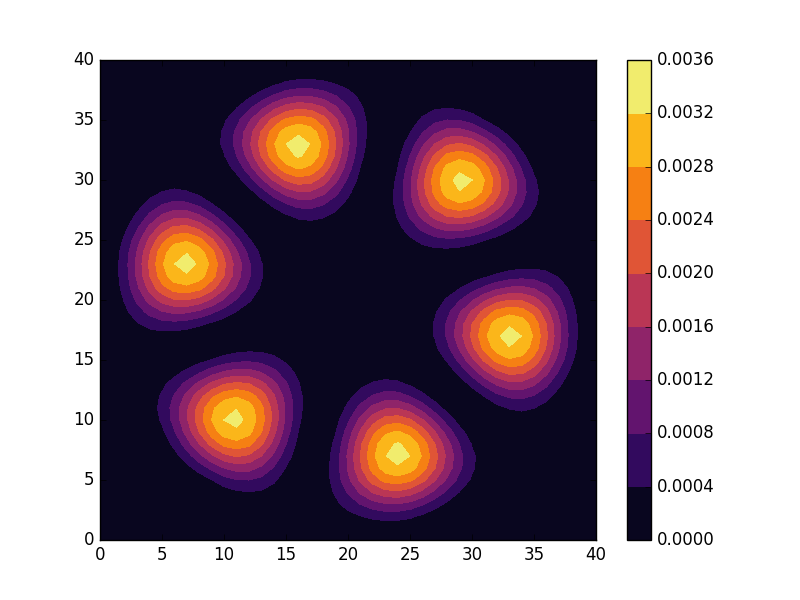

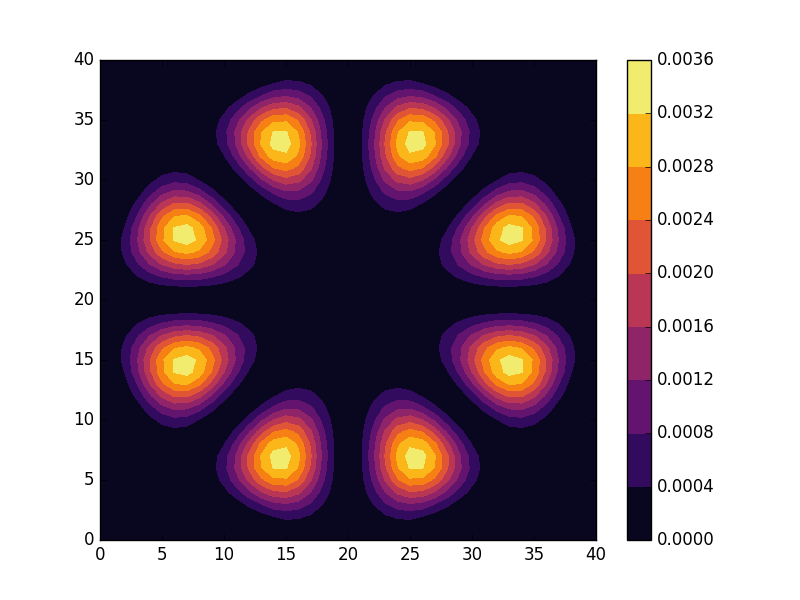

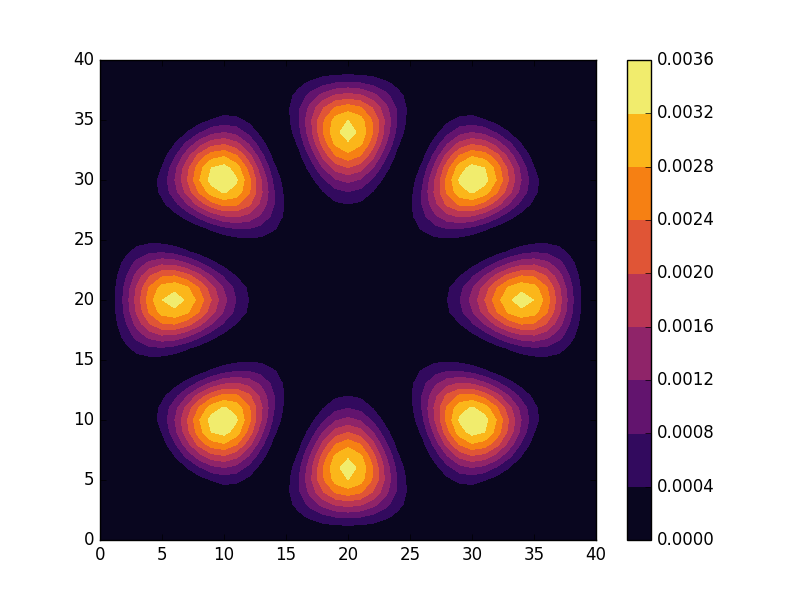

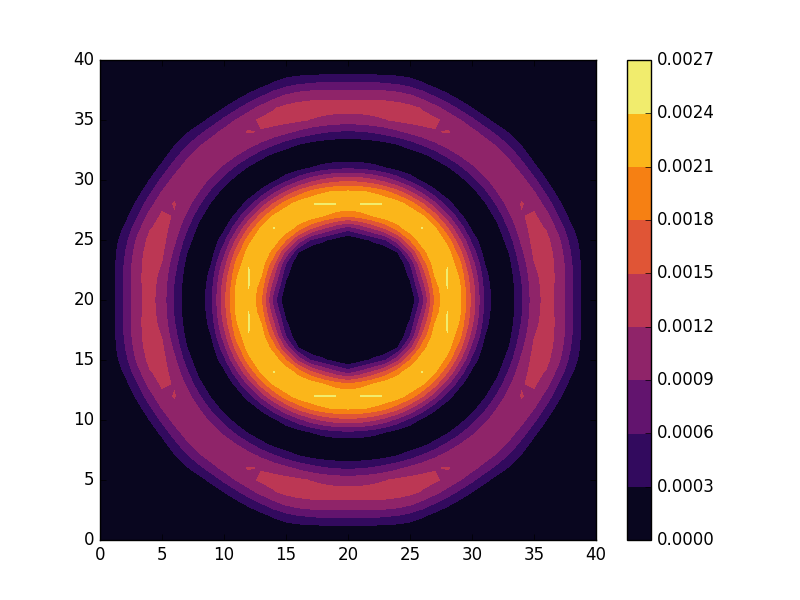

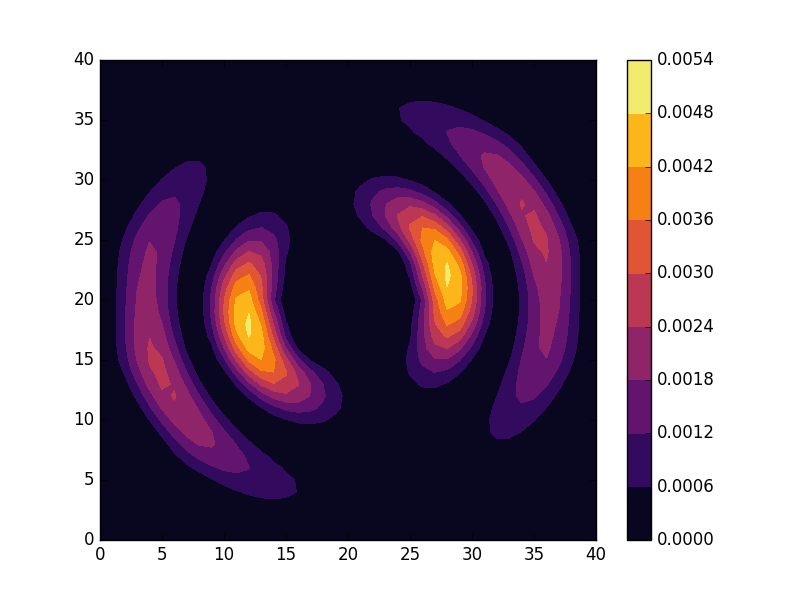

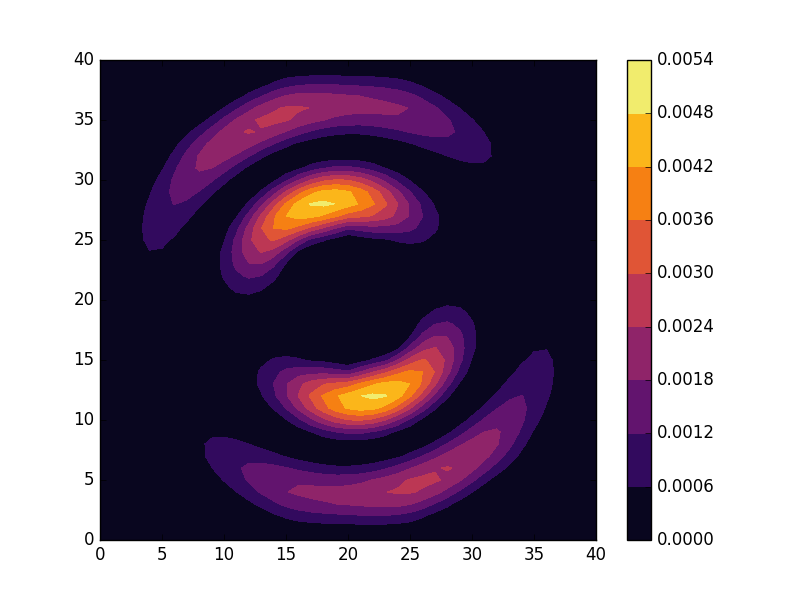

Results

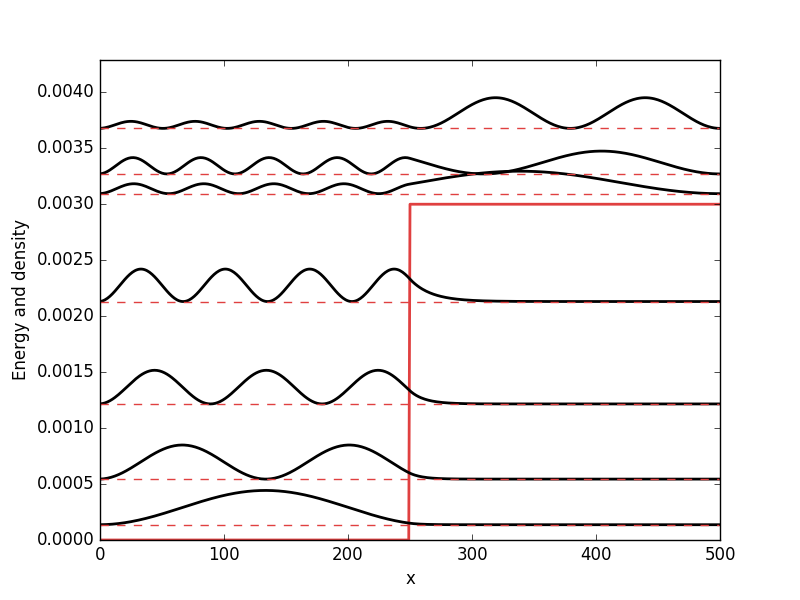

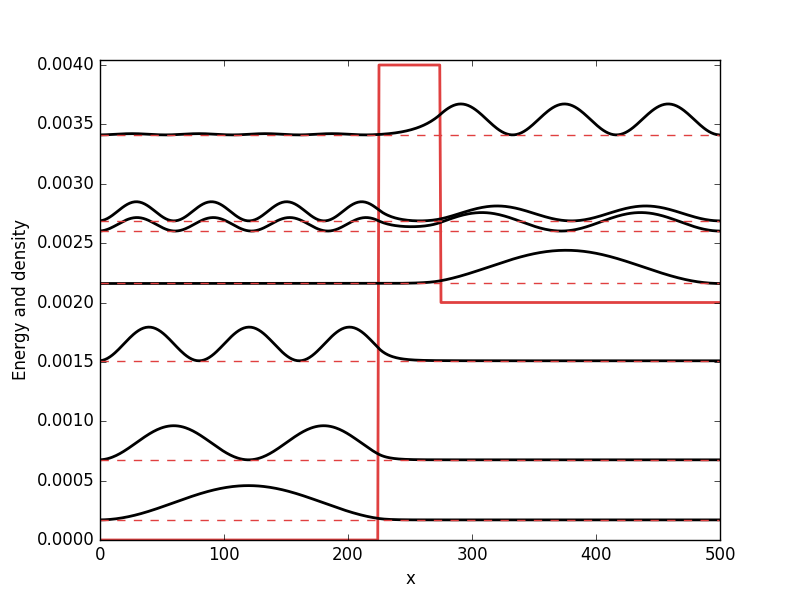

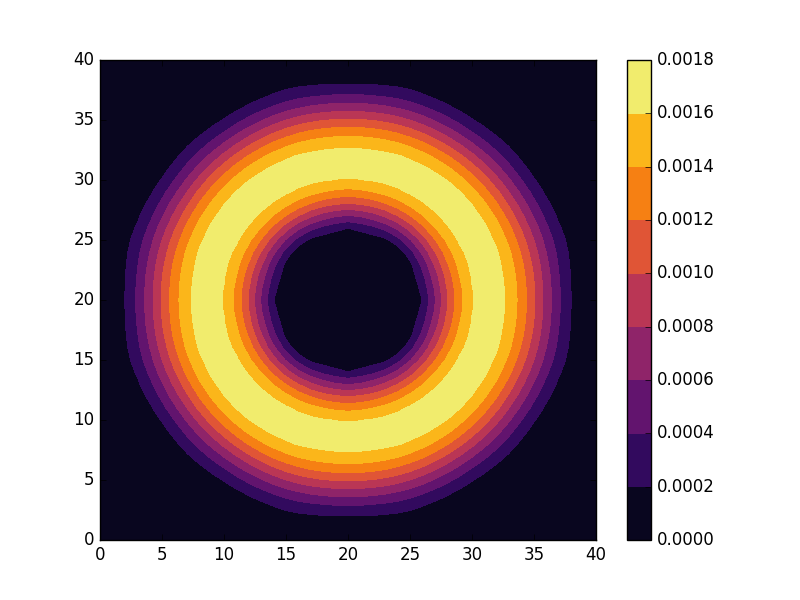

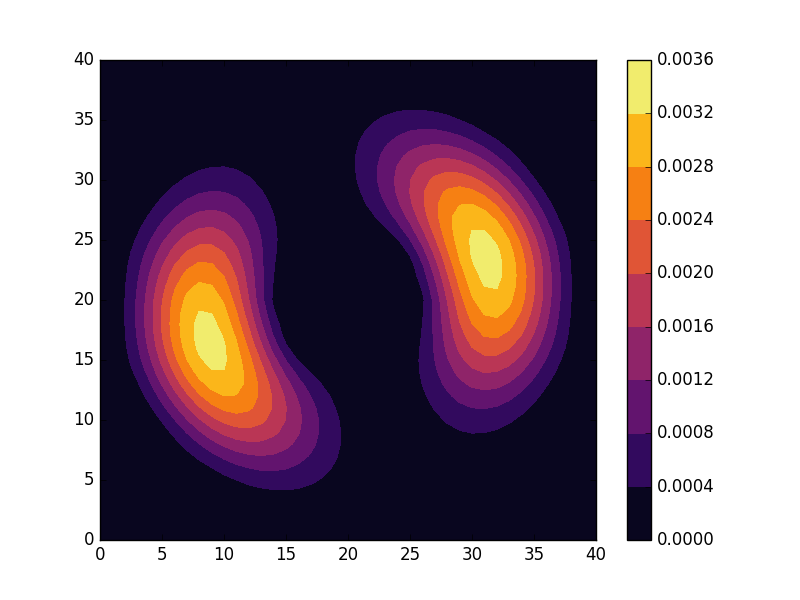

Below the results are shown. The DOS is characteristically v-shaped around $E = 0$ and is peaked 1/3 of the way to the band edge. In the band structure, we can see the Dirac cone at the K-point (the second vertical line). The low density of states at $E = 0$ can be understood as a consequence of the small number of k-points around the K-point for which the energy is close to zero. The cone has a finite slope, and therefore only a single k-point is at $E = 0$ (one more point is at the $K'$-point, which we have not considered here but which is at another corner of the Brillouin zone). Compare this with the flat region at the M-point (first vertical line), which results in a significant number of k-points with roughly the same energy. This flat region is responsible for the strong peaks in the DOS.

DOS

Band structure
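The counting argument behind the shape of the DOS can be reproduced with a small standalone sketch. The snippet below is plain C++ without TBTK, and the function name and binning are assumptions made for illustration. It uses the analytic upper band $E(\mathbf{k}) = |f(\mathbf{k})|$ from the Hamiltonian section, written in terms of the phases $\mathbf{k}\cdot\mathbf{r}_0$ and $\mathbf{k}\cdot\mathbf{r}_1$, and counts how many mesh points fall into each energy bin between $0$ and $3t$.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// Histogram of the upper band energies on an N x N mesh of k-points
// k = (x/N)*k_0 + (y/N)*k_1. Energies are measured in units of t and the
// interval [0, 3] is divided into numBins bins. Illustrative helper; not
// part of TBTK.
std::vector<unsigned int> upperBandHistogram(
    unsigned int N,
    unsigned int numBins
){
    assert(N > 0 && numBins > 0);
    const double pi = std::acos(-1.0);
    std::vector<unsigned int> histogram(numBins, 0);
    for(unsigned int x = 0; x < N; x++){
        for(unsigned int y = 0; y < N; y++){
            const double p0 = 2*pi*x/N; // k . r_0
            const double p1 = 2*pi*y/N; // k . r_1

            // |f(k)|^2/t^2 = |1 + exp(-i*p1) + exp(-i*(p0 + p1))|^2.
            const double f2 = 3 + 2*std::cos(p0) + 2*std::cos(p1)
                + 2*std::cos(p0 + p1);
            const double E = std::sqrt(std::max(f2, 0.0));

            // Clamp so that E = 3 (the Gamma point) lands in the last bin.
            const unsigned int bin = std::min(
                static_cast<unsigned int>(E/3*numBins),
                numBins - 1
            );
            histogram[bin]++;
        }
    }
    return histogram;
}
```

With, for example, a 300 x 300 mesh and 30 bins, the bins next to $E = t$ collect far more k-points than the bin next to $E = 0$, which mirrors the peak at 1/3 of the band edge and the dip at zero energy described above.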

Full code

The full code is available in src/main.cpp in the project 2019_07_05 of the Second Tech code package. See the README for instructions on how to build and run.
